DANIEL ALLEN CRAIG

B.A., University of Iowa, 1997

M.A., University of Illinois, Urbana-Champaign, 2001

The roar of technology can be heard in every hall and classroom in the nation. Schools are in a rush to "computerize" everything from classrooms to testing. Research on the effectiveness of these moves has been brushed to the wayside, and scientific scrutiny has given way to blind obedience. It is time to step back and investigate the basic tenets of computerization. This study focuses on the issue of handwriting quality and word-processing as biasing factors in English as a Second Language testing, specifically the English as a Second Language Placement Test (EPT) at the University of Illinois, Urbana-Champaign. Four expert EPT raters rated a total of forty essays, twenty original and twenty transcribed in either messy or neat handwriting or on a word-processor. Results indicate an interesting biasing effect for word-processed transcriptions. Word-processed transcriptions were scored lower than their handwritten counterparts regardless of original legibility. No such effect was found for handwriting legibility, though regardless of legibility handwritten transcriptions were rated higher than their original counterparts.

|

Table of Contents:

|

|||

| Introduction | 1 | ||

| Literature Review | 7 | ||

| Method | 20 | ||

| Results | 24 | ||

| Discussion | 28 | ||

| Conclusion | 33 | ||

| References | 34 | ||

| Appendix A | EPT Benchmarks | 37 | |

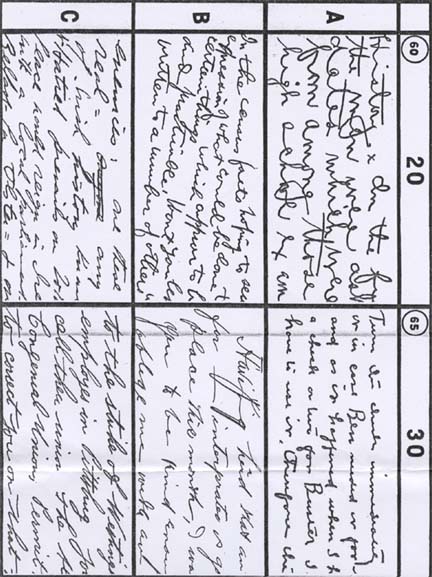

| Appendix B | Ayres Handwriting Scale | 40 | |

| Appendix C | Rater Instructions | 44 | |

| Appendix D | Informed Consent Form | 45 | |

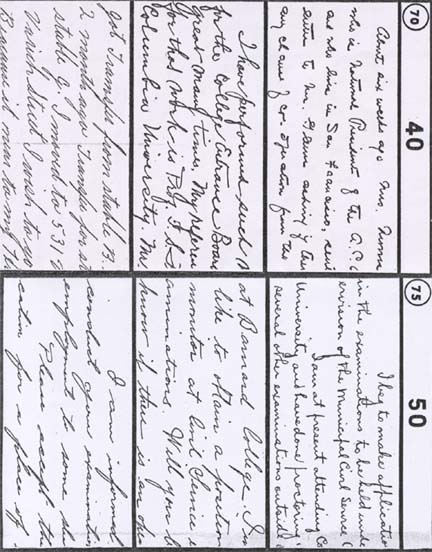

| Appendix F | Neat Examples | 46 | |

| Appendix E | Messy Examples | 47 | |

Had there been good answers to these questions, further study would not have been warranted. An exhaustive review of the literature was thus undertaken, which left many questions yet to be answered. No definitive evidence on the effects of handwriting legibility and/or word-processing in ESL contexts was presented, though various studies arrived at the same answers.

The validity of holistic rating must first be called into question. The EPT is rated holistically, but what is holistic rating? The rating is generally impressionistic (Cooper, 1977; Odell & Cooper, 1980; Charney, 1984) and, not surprisingly, sometimes faulted. Reading and score reliability (Charney, 1984; Diederich, 1974; Huck & Bounds, 1972; Stalnaker, 1934) were major issues in many studies. While most of these issues seem to be dealt with effectively by ample rater training and calibration (Charney, 1984; Stalnaker, 1934), other problems involving rater bias persist. Rater bias in holistic scoring comes in many forms, for example gender (Bull & Stevens, 1979), the rater's handwriting quality (Huck & Bounds, 1972), and the writer's handwriting quality (Briggs, 1970; Chou, Kirkland, & Smith, 1982; Hughes, Keeling, & Tuck, 1983; James, 1927; Markham, 1976; Powers, Fowles, Farnum, & Ramsey, 1994; Sloan & McGinnis, 1978; Sweedler-Brown, 1992; Brown, in press).

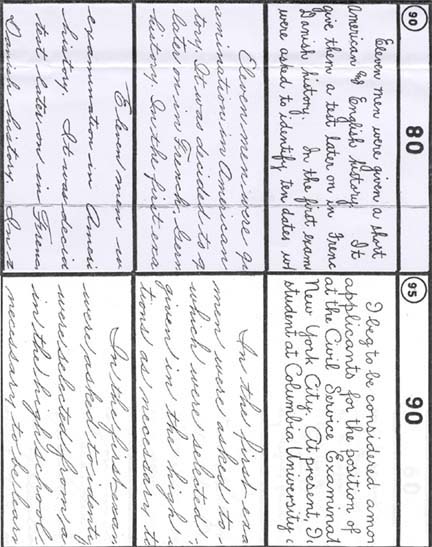

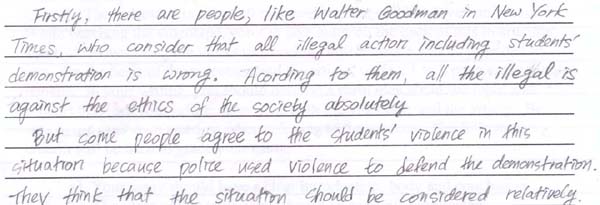

Since a major part of this study revolves around handwriting legibility, something should be said about its measurement. The Ayres Measuring Scale for Adult Handwriting (Ayres, 1920) is mentioned in many studies regarding handwriting quality. This scale is scored from 20 to 90 and is accompanied by three handwriting samples for each possible score (Appendix B). Other methods of legibility judgment were attempted in various studies, including group rating (Brown, in press; Hughes, Keeling, & Tuck, 1983; Powers, Fowles, Farnum, & Ramsey, 1994) and arbitrary judgment (Briggs, 1970; Markham, 1976; Sloan & McGinnis, 1978; Sweedler-Brown, 1992). Even with the assistance of the Ayres scale or others like it, the determination of whether an essay is "neat" or "messy" comes down to the impression of the reader.

Previous studies have provided a multitude of answers to questions regarding the effects of legibility and word-processing on essay ratings. Unfortunately, those studies have not always concurred on whether neat or messy handwriting results in higher or lower scores. Nor did they determine whether handwritten or word-processed essays receive higher or lower scores.

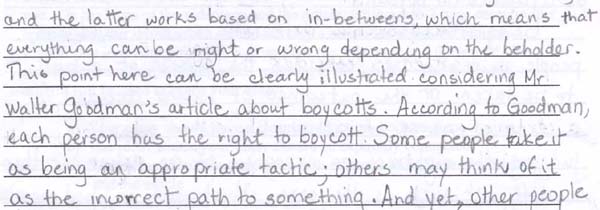

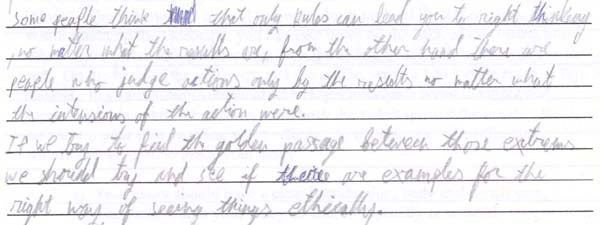

A number of studies came to the conclusion that neat handwriting is scored higher than messy handwriting (Briggs, 1970; Chou, Kirkland, & Smith, 1982; Hughes, Keeling, & Tuck, 1983; James, 1927; Markham, 1976; Powers, Fowles, Farnum, & Ramsey, 1994; Sloan & McGinnis, 1978; Sweedler-Brown, 1992), but at least one study (Brown, in press) concluded that messy handwriting benefits the writer, in that the raters gave essays with poor legibility higher scores than essays with better legibility. Whether raters prefer neat handwriting or messy handwriting makes no difference; any preference highlights the need to educate raters on the neat/messy bias in rating essays.

Whether or not neat handwriting is rated higher than messy handwriting may be a non-issue in today's technologically advanced educational institutions. Everything from university applications to ordering textbooks is done via computer. Word processors and e-mail have dominated the arena of correspondence, as well as classrooms, for some time now. This trend has not escaped ESL proficiency testing. Even now, the viability of computerized EPT testing is being investigated and is logically not far from fruition. Learning from others' past mistakes, the University of Illinois, Urbana-Champaign is not in a rush to jump onto the computer bandwagon. Technology for technology's sake is too often the norm. Educators must step back and determine what the possible benefits and drawbacks are in the computerization of a test like the EPT.

The second part of this study focuses on the effect of rater bias in relation to handwritten versus word-processed essays. A majority of studies conclude that handwritten essays are rated higher than word-processed essays (Arnold, Legas, Pacheco, Russell, & Umbdenstock, 1990; Bridgeman & Cooper, 1998; Brown, in press; McGuire, 1996; Peterson & Lou, 1991; Sweedler-Brown, 1991; Sweedler-Brown, 1992). One interesting study (Marshall, 1972) found that handwritten essays were rated lower than typed essays when both essays were free from compositional errors. Unfortunately, this is usually not the case in most ESL contexts, where the majority of errors are compositional. Rating handwritten essays higher than word-processed essays is not a problem if the assessment is uniformly handwritten or word-processed, but most likely the movement from handwritten EPT to word-processed EPT will happen in stages. Removing the possibility of handwriting the EPT could disadvantage many incoming students who have had little access to computers or have poor typing skills.

Holistic Rating

The researcher investigated holistic rating in order to understand better the background behind the EPT rating system. This resulted in some clarifications as well as some confusion.

The EPT is rated holistically, but this generic term encompasses various methodologies of rating, reflecting varying philosophies. Two very rewarding overviews of these methodologies are Cooper (1977) and Odell & Cooper (1980). In these papers, the authors give a general overview of what holistic rating is (Cooper, 1977), as well as some of the methodologies used in holistic rating (Cooper, 1977; Odell & Cooper, 1980). Cooper (1977) states that "Holistic evaluation of writing is a guided procedure for sorting or ranking written pieces" (p. 3). He goes on to explain that holistically rated papers are matched with other papers in a range, scored for certain features, or assigned a grade. EPT rating falls under this general description; thus we will refer to it as holistic rating.

Odell and Cooper (1980) further break this distinction down into General Impression scoring (ETS), Analytic Scale (Diederich) scoring, The Assessment of "Relative Readability" (Hirsch), and Primary Trait scoring (Lloyd-Jones). Unfortunately, the EPT scoring method does not fall neatly into any of these categories. Like General Impression scoring and the Analytic Scale, EPT training and calibration employ "range finders". These papers cover the possible values of essays and are supposed to help the raters internalize the various qualities of these samples. Unlike General Impression scoring, though, the EPT rating system utilizes "benchmarks" as general criteria for rating. These benchmarks are not rated individually, though, as in the Analytic Scale. The qualities of the essay as a whole are evaluated, similar to Primary Trait scoring. Primary Trait scoring entails analysis of the prompt and audience; while the EPT rating system does consider the audience, the prompt receives little consideration from the rater.

This leaves us with a composite of various methodologies in forming the EPT rating system. A definition as ambiguous as this simply brings us back to "holistic" rating as the best term to describe the EPT rating process.

For a rater to reliably and consistently rate essays, they must be free from outside influences and biases. The question of whether a rater can be truly reliable is prominent in establishing holistic evaluation as valid. There are numerous studies establishing holistic evaluation as valid and reliable when using trained and calibrated raters in the process (Cooper, 1977; Charney, 1984; Stalnaker, 1934), but others show weaknesses in holistic evaluation (Diederich, 1974; Huck & Bounds, 1972; Smith, 1993).

The environment in which rating takes place and the training the raters have received are major concerns in holistic evaluation. Cooper (1977) states that "reliability can be improved to an acceptable level when raters from similar backgrounds are carefully trained" (p. 18).

This is similar to what Stalnaker (1934) found. When evaluating rater reliability in a school assessment, Stalnaker noticed that reliabilities were as low as .30, leading him to note that "these reliabilities indicate that the mark a student receives is more dependent on the mood of the reader than on the quality of the student's writing" (p. 219). This problem seemed to be alleviated when training and standards were introduced into the process. After this, reliabilities averaged .88.

Charney (1984) states that, in order to prepare raters for this type of rating, the training process must include certain aspects: "the aspects of training sessions that are intended to insure that readers use the right criteria are peer pressure, monitoring, and rating speed" (p. 73). Charney believes that the circumstances involved in holistic rating (number of essays, speed of rating, and monitoring) are beneficial to the rating process in general.

There are a number of factors that may make holistic assessment unreliable at the level of the individual rater. One of these is rater bias. Bias comes in many forms including gender, handwriting legibility, or simply expectations. These biases are reflected in numerous studies, including Diederich (1974), Huck & Bounds (1972), Smith (1993), and Hughes, Keeling, & Tuck (1983).

Diederich (1974) had 60 raters (10 college English teachers, 10 Social Science, 10 Natural Science, 10 Writers/Editors, 10 Lawyers, and 10 Executives) rate essays by 300 first-month college students at three colleges. The raters were instructed to sort essays into nine piles according to merit, with at least 12 papers in each pile. Out of 300 essays, 101 received every grade possible (1-9), 94% received 7, 8, or 9 different grades, and no essay received fewer than 5 different grades. This demonstrates that untrained raters from different backgrounds do not make reliable raters (Cooper, 1977).

Huck & Bounds (1972) found that raters with neat handwriting tended to rate neat essays higher than messy handwritten essays, but that raters with messy handwriting tended to show no difference in rating neat or messy handwritten essays. This is an interesting conclusion that no other study had investigated.

Hughes, Keeling, & Tuck (1983) found that rater expectations of writer ability do make a difference in the score an essay receives. Twenty-five experienced teachers scored essays by 38 high school students aged 13-14. The best, average, and worst were selected for model answers. Handwriting samples were then created by 26 children who copied the essays in "messy" and "neat" handwriting. Four of the especially neat essays and three of the especially messy essays were chosen, and these seven were rated on neatness (1 = best, 7 = worst) by 84 university students. Experimental booklets were prepared, mixing the high and low scores and neat and messy essays. A total of 224 raters were then asked to mark a false set of intelligence and achievement tests supposedly completed by the writers of the essays. The raters were then asked to score the essays 1-7. The results indicated that expectations influenced the scores that the essays received, as did handwriting legibility (neatly handwritten essays scored better than messily handwritten essays), but no correlation was found between scorer expectations and handwriting quality. This study could also suggest that essays should be cleared of any marks indicating name or nationality, especially in ESL contexts, to guard against rater bias in regard to expectations for certain nationalities.

Most of these studies included no rater training and thus the bias in each situation may be alleviated or eliminated by focused rater training. Later, I will discuss differences in rating messy versus neat handwritten essays and handwritten versus word-processed essays, which may not be aided by rater training.

Most teachers take it for granted that every class will contain a student or two with near-illegible handwriting. While most teachers can insist that students turn in work typed on a word-processor, testers have a more difficult time demanding that. Many students, especially in ESL, do not have the typing and/or computer skills necessary to take a timed, high-stakes test on a computer. Also, many tests are given in large group settings and many schools do not have adequate computer labs needed to administer them. Thus, handwritten tests are still necessary and the problem of legibility continues to exist. It is difficult to predict what effect this has on student scores and placement, but several studies have attempted to answer this question. Most conclude that messy handwriting results in lower scores (Briggs, 1970; Chou, Kirkland, & Smith, 1982; Hughes, Keeling, & Tuck, 1983; James, 1927; Markham, 1976; Sloan & McGinnis, 1978; Sweedler-Brown, 1992), while one found that neat essays received lower scores than messy essays (Brown, in press). Though many studies came to the same conclusion, they did it using various methodologies.

James (1927) studied 35 high school seniors in their second month of classes. They wrote an essay during one class period. These essays were then rated by teachers, using 70% for passing and 100% for perfect. These papers were then copied into the opposite handwriting quality, using the "Thorndike scale". These papers were graded two months after the first set of papers. James found that papers with better handwriting quality received higher scores.

Briggs (1970) also found that neat handwriting received higher scores than messy handwriting. Briggs chose 10 essays of similar ability from a total of 110. These were then transcribed in 10 different handwriting qualities. These 100 essays were then rated from 1 to 10 in handwriting quality by 10 teachers. The essays were then rated by 10 other raters. They were instructed to rank the essays first 1-10, then give them a score out of 20, basing their score only upon total impression of the essay as a whole.

Markham (1976) took 22 papers written by fifth grade students and transcribed them on a word-processor to avoid biasing them while judging content quality (this part of the procedure may be faulty and return skewed results, but we will deal with that in the next section). Nine of the original 22 papers were chosen to represent high, medium, and low content quality. These 9 were then transcribed into 9 qualities of handwriting. The 81 resulting papers were grouped into 9 sets of 9 papers and were ranked 1-9 by raters. Markham concluded that papers with better handwriting did consistently better than those with poor handwriting.

Some studies, like Hughes, Keeling, and Tuck (1983), included rater expectations in their study. Twenty-five experienced teachers rated essays by 38 high school students aged 13-14. The best, average, and worst were selected for model answers. From these, 26 children copied the essays in "messy" and "neat" handwriting. Four of the especially neat essays and three of the especially messy essays were chosen. These were then ranked 1 (best) through 7 (worst) by 84 university students. The essay raters were given experiment packets with high/low scores and neat/messy essays mixed. They were told to score a set of false intelligence and achievement test answer sheets created for each essay. These were meant to create expectations for the essays and show how the intelligence/achievement sheets affected the scoring of the essays. The researchers found that not only did handwriting make a difference (neat handwriting rated higher than messy handwriting), but rater expectations made a difference as well.

The only study that actually found that messy handwriting was rated higher than neat handwriting was Brown (in press). Contrary to Brown's hypothesis, "bad" handwriting was found to be an advantage in scoring. Forty IELTS essays were chosen from five administrations of the test and five different prompts. Each was typed, with errors retained. Each essay was rated six times, with no one rating the same essay twice. Legibility was rated from 1-6, as was content. Findings indicated that messy handwriting was rated higher than neat handwriting. Since this is the only study that found these results, expectations that messy handwriting will be scored higher than neat handwriting are low, but it is an interesting experiment and will be reviewed further in the next section.

The next facet of our study concerns handwritten versus word-processed essays. It seems that if neat handwriting is rated higher than messy handwriting, then word-processed essays will be rated higher than handwritten essays. These expectations seem to fall flat when looking at relevant research on the subject. Most studies came to the conclusion that handwritten essays are rated higher than word-processed essays (Arnold, Legas, Pacheco, Russell, & Umbdenstock, 1990; Bridgeman & Cooper, 1998; Brown, in press; McGuire, 1996; Peterson & Lou, 1991; Sweedler-Brown, 1991; Sweedler-Brown, 1992). One study by Marshall (1972), though, found that typed essays received higher scores than handwritten essays when free of compositional errors.

The biggest difference between research on legibility bias and research on handwriting versus word-processing is that the latter tends to delve into reasons for the differences. This is evident in the various research reviewed here.

Arnold, Legas, Pacheco, Russell, and Umbdenstock (1990) investigated two questions with bearing on our current study. The first was whether rater bias skewed scores on word-processed papers in holistic rating. The researchers converted 300 randomly selected, previously scored, handwritten placement essays to word-processed papers, including all errors in language and surface conventions. The converted papers were rated and then the raters were surveyed to find out which type of paper they preferred to read. The findings indicated that rater bias may have skewed the scores on word-processed papers. The word-processed papers were rated lower than their handwritten originals, and half of the raters indicated that they preferred to read the handwritten versions.

The second research question asked what factors contributed to reader bias. The researchers mixed word-processed papers with handwritten papers and had them rated. Raters were then surveyed immediately afterward about their attitudes toward scoring each type of paper. Next, a follow-up discussion was conducted with the raters about the differences between reading word-processed papers and reading handwritten papers. The researchers found that readers preferred scoring the handwritten papers, even though they were harder to read. They also had higher expectations of word-processed papers.

The findings from both studies indicated that word-processed papers were rated lower than handwritten papers (regardless of legibility), and a number of reasons were given. The perceived length of essays was one reason: word-processed essays appear shorter than their handwritten counterparts and thus receive lower scores. Longer versions of word-processed papers were rated the same as the handwritten versions. The rater survey also found that surface errors were easier to notice in word-processed papers and that raters found it easier to identify with handwritten essays. Finally, raters gave writers the benefit of the doubt when they encountered difficulty in reading their handwriting. Though some of these findings run counter to those found in the studies regarding handwriting legibility, they are what one would expect from a rater survey.

Brown (in press) found that handwritten essays receive higher grades than word-processed essays, with messy handwriting rated the highest. This would seem to agree with the survey results in the previous study. Sweedler-Brown (1992), however, found that neat handwriting is rated higher than word-processed text, but found no difference between messy handwriting and word-processed text. In this study, 27 essay samples were taken from 700 (9 neat, 9 messy, and 9 word-processed). All samples were transcribed, leaving 81 total samples, which were mixed with filler essays to throw off the raters. The Sweedler-Brown study seems to negate the factors of writer identity, length, and ability to notice compositional errors.

The Peterson and Lou (1991) study, though, strengthens the case for perceived length as a factor in lower word-processed scores. In this study, 103 ninth graders took a Direct Writing Assessment in Portland, Oregon. Their handwritten papers were word-processed, retaining all errors. The essays were all scored (original and word-processed) 1-5 for each of 6 traits: (1) ideas and content; (2) organization; (3) voice; (4) word choice; (5) sentence fluency; and (6) conventions. While there were no differences between handwritten and word-processed short papers, short papers were rated lower than longer papers. Interestingly, the conventions trait was rated lower for word-processed papers.

In a study conducted by McGuire (1996), voice was found to be one of the traits where word-processed essays rate higher than handwritten essays. A total of 162 eleventh-grade students participating in the Kansas Writing Assessment in 1993 and 1994 wrote essays on computer and then transcribed them in their own handwriting. Half of the essays were scored in word-processed form and half in handwritten form using a six-trait analytical scale: (1) organization; (2) word choice; (3) sentence fluency; (4) conventions; (5) ideas/content; and (6) voice. McGuire found that handwritten papers scored higher than word-processed papers across the traits, except for ideas/content and voice. This runs counter to the finding of Arnold, Legas, Pacheco, Russell, and Umbdenstock (1990).

Sweedler-Brown (1991) found that content quality also affects the rating given to word-processed essays. Seventy essays from 700 university English exit exams were word-processed, with 61 essays in each form (handwritten and word-processed) finally being used in the study. These 122 essays were combined with a group of regular exit exams (about 85% handwritten and 15% word-processed). A scale of 1-6 was used, with the composite scores from two raters being used as the actual score. The findings suggest that, among essays of higher content quality, handwritten essays were rated higher than word-processed essays. No corresponding effect was found for essays with lower quality content. This could mean that in a situation like the EPT, students whose essays would normally earn an exemption or placement in the upper-level class may, if their essays are word-processed, find themselves in lower-level classes than necessary.

Bridgeman and Cooper (1998) came to a similar conclusion in their study. They used data from 3,470 examinees, which consisted of both handwritten and word-processed essays. Handwritten essays were found to have higher scores than word-processed essays, but rater reliability was higher for the word-processed essays. According to the authors, "moving from handwritten to word-processed essay assessments would appear to have positive benefits in terms of enhanced reliability" (p. 6).

Most of these studies seem to suggest that using word-processed essays for EPT assessment would be beneficial. Raters seem to rate word-processed essays more reliably than handwritten essays, as well as assess what they set out to assess (i.e., content).

The sample essays used in this study came from actual EPT essays from a single session conducted in 1996. The rationale behind choosing essays from 1996 was that they were old enough to exclude any of the researcher's students, thus ensuring confidentiality. The essays were recent enough to have the current format of the present day EPT.

First, twenty essays were chosen at random based on handwriting quality. Ten of the chosen essays were very neatly written and ten were messily written. These were then divided into two groups of ten, each composed of five neat and five messy samples. These groups represented the two experiments: neat versus messy handwriting (legibility) and handwritten versus word-processed essays. The samples in the legibility group were then transcribed to the opposite style of handwriting by seven volunteers with obviously neat or messy handwriting; no transcriber copied more than two essays. The essays were copied exactly, retaining formatting and composition errors.

The samples in the handwritten versus word-processed group were transcribed by the researcher. The transcriptions retained all original characteristics of the handwritten essays, including as much of the original formatting as possible. The word-processed transcriptions were double-spaced in 12-point Times New Roman font.

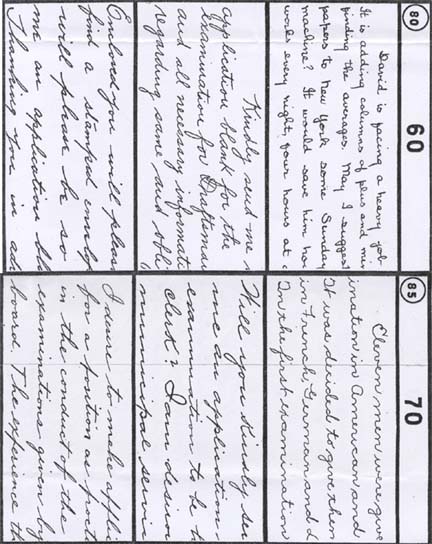

All samples, both original and transcribed, were then scanned in color at a high resolution (300 dpi) using a high quality scanner. All images were then edited to have a uniform appearance, removing any identifying marks or markings from the original rating, and providing each with an identifying number. The samples were then printed on a high quality color printer. This resulted in a uniform appearance, while still appearing to be an original.

Two rating sessions were arranged three months apart. Two raters were assigned to the legibility group and two raters to the handwritten versus word-processed group. Both groups had the same instructions, which were almost identical to those given to raters when rating the EPT. The rater instruction sheet is included in Appendix C. Raters were not told the purpose of the study, only that they would be rating EPT essays; the purpose and method of the study were revealed to them only after the second session was completed. Prior to beginning the first rating session, though, all raters signed informed consent forms, which informed them of their rights in the research process. The raters were also encouraged to ask questions regarding the experiment, but questions that could affect the outcome of the study were deferred until the experiment was concluded.

Raters were instructed to rate the essays as they would regular EPT essays. EPT essays are assigned a score of too low, 400, 401, or exempt, which corresponds to the student's placement within the service courses. There were two additional notations that the raters were told to include, which are not established constituents of EPT rating. First, raters were to assign plus (+) or minus (-) notations to the 400 and 401 scores as a fine-tuning mechanism for the experiment, a procedure first studied by Steward (1998). This is actually a normal part of the EPT rating process that most raters employ when they are unsure of which score to give to a borderline essay and are passing it on to a second reader. The second change to the rating process was for raters to make notations on the essays themselves that reflected the rating process. They were encouraged to write on the tests any comments that they considered in their rating process (e.g., disorganized, awkward sentence/phrase, grammar errors). This was done to give the researcher insight into the raters' considerations during the rating process, particularly whether or not raters would comment on legibility. The raters were also asked to avoid discussing the essays or the scores given to an essay until both sessions of the experiment were completed. This was to guard not only against group norming but also against the discovery that they were rating the same, yet aesthetically different, essays.

The samples given to the raters for each session were divided in two. During the first session, each rater received half of the original essays and half of the transcribed essays, never rating two versions of the same essay in one session. The raters received the other half of the original and transcribed essays during the second session. This resulted in both copies of the same essay, original and transcribed, being rated three months apart, which, it was hoped, would reduce the effect of recognition when rating essays with the same content.

The second session for the handwriting legibility group was discarded due to research data management problems. A third session was held for the members in the legibility group, where they rated the essays that they were supposed to rate in the second session.

The final scores were converted to a 7-point scale for purposes of analysis, with 400- being 1 and Exempt being 7. Therefore, on this scale a rating of 401 equals 5 and a rating of 400 equals 2. These scores were then analyzed, with the results below.

| EPT score | 7-Point Scale |
|---|---|
| 400- | 1 |
| 400 | 2 |
| 400+ | 3 |
| 401- | 4 |
| 401 | 5 |
| 401+ | 6 |
| Exempt | 7 |

Table 1 - 7-Point Scale Conversion Chart
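The conversion in Table 1 amounts to a simple lookup. The following is a minimal Python sketch of that lookup, not part of the original analysis. The names `EPT_TO_SEVEN_POINT` and `to_seven_point` and the sample ratings are hypothetical, and treating any score outside Table 1 (such as "too low") as an error is an assumption.

```python
# Mapping copied from Table 1. Keys are lowercased so that "Exempt" and
# "exempt" are treated alike (an assumption made for convenience).
EPT_TO_SEVEN_POINT = {
    "400-": 1,
    "400": 2,
    "400+": 3,
    "401-": 4,
    "401": 5,
    "401+": 6,
    "exempt": 7,
}


def to_seven_point(ept_score: str) -> int:
    """Convert an EPT rating with optional +/- notation to the 7-point scale."""
    key = ept_score.strip().lower()
    if key not in EPT_TO_SEVEN_POINT:
        # Table 1 lists no value for other ratings, so none is invented here.
        raise ValueError(f"No 7-point value defined for rating: {ept_score!r}")
    return EPT_TO_SEVEN_POINT[key]


# Hypothetical ratings, used only to illustrate the lookup.
sample_ratings = ["401", "400-", "Exempt", "400+"]
print([to_seven_point(r) for r in sample_ratings])  # [5, 1, 7, 3]
```

Keeping the mapping in a single dictionary makes the scale easy to audit against Table 1.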

The results from these experiments can best be seen in a 2x2 relationship using the median scores of opposite transcriptions (neat handwriting transcribed to messy handwriting and messy handwriting transcribed to neat handwriting) and word-processed versions of neat originals and word-processed versions of messy originals.

| | Opposite Transcribed Essays | Computer Transcribed Essays |
|---|---|---|
| Original Neat Essays | Median Score = 5.0 | Median Score = 3.4 |
| Original Messy Essays | Median Score = 4.1 | Median Score = 2.9 |

Table 2 - 2x2 analysis of essay scores

Table 2 reveals an interesting effect between two of the categories: (1) originally neat essays that were transcribed in messy handwriting and (2) originally messy essays that were word-processed. Messily transcribed, originally neat essays showed an elevated median score (5.0), while word-processed, originally messy essays showed a rather depressed median score (2.9).

| | Original Essays | Opposite Essays |
|---|---|---|
| Original Neat Essays | Median Score = 4.5 | Median Score = 5.0 |
| Original Messy Essays | Median Score = 3.5 | Median Score = 4.1 |

Table 3 - Original Essays versus Opposite Transcriptions

Table 3 charts the median scores given to original neat and messy essays and

their opposite transcriptions. The results indicate that originally messy essays

scored lower than originally neat essays and that transcribed essays received

higher scores than their original counterparts. Of these, the highest scoring

essays were originally neat essays, which had been transcribed into messy handwriting.

| | Original Essays | Word-Processed Essays |
| --- | --- | --- |
| Original Neat Essays | Median Score = 4.1 | Median Score = 3.4 |
| Original Messy Essays | Median Score = 4.5 | Median Score = 2.9 |

Table 4 - Original Handwritten Essays Versus Word-Processed Essays

Table 4 charts the median scores given for original essays, both neat and messy, and the word-processor transcribed essays. The results indicate that word-processed essays received lower scores in both the neat original and messy original categories. Surprisingly, originally messy essays were rated highest (4.5) while their word-processed transcriptions were rated lowest (2.9).

| Neat to Messy Handwriting | % of essays (count) |
| --- | --- |
| No Change | 30% (3) |
| Higher | 40% (4) |
| Lower | 30% (3) |

Table 5 - Score Change from Neat to Messy Handwriting

| Messy to Neat Handwriting | % of essays (count) |
| --- | --- |
| No Change | 10% (1) |
| Higher | 60% (6) |
| Lower | 30% (3) |

Table 6 - Score Change from Messy to Neat Handwriting

Tables 5 and 6 chart the percentage of essays whose scores changed in each legibility group. The numbers show an interesting trend where, regardless of legibility, transcribed essays were more often scored higher than lower. The difference is greatest in the transcription from messy to neat handwriting, though the pattern still exists in transcriptions from neat to messy handwriting.

| Handwritten to Word-Processed | % of essays (count) |
| --- | --- |
| No Change | 20% (4) |
| Higher | 10% (2) |
| Lower | 70% (14) |

Table 7 - Score change from handwritten to word processed essays

Table 7 displays the percentage of essays that were rated higher, rated lower, or received the same score when transcribed from handwritten to word-processed versions. The results clearly show that a majority of word-processed essays were rated lower than their handwritten counterparts.

| Neat Handwritten to Word-Processed | % of essays (count) |
| --- | --- |
| No Change | 30% (3) |
| Higher | 10% (1) |
| Lower | 60% (6) |

Table 8 - Score change from neatly handwritten to word-processed essays

| Messy Handwritten to Word-Processed | % of essays (count) |
| --- | --- |
| No Change | 10% (1) |
| Higher | 10% (1) |
| Lower | 80% (8) |

Table 9 - Score change from messily handwritten to word-processed essays

Tables 8 and 9 break Table 7 into two groups, neat originals and messy originals. Both tables show that regardless of original handwriting quality, word-processed essays are rated lower. The greatest difference exists in messy originals, with 80% of transcribed essays receiving lower scores than their handwritten counterparts.
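As a consistency check, the counts in Tables 8 and 9 can be summed to reproduce Table 7. The sketch below assumes only the counts reported in those tables; the variable and function names are hypothetical and are not part of the original analysis.

```python
from collections import Counter

# Counts copied from Table 8 (neat originals) and Table 9 (messy originals).
neat_to_wp = Counter({"no_change": 3, "higher": 1, "lower": 6})
messy_to_wp = Counter({"no_change": 1, "higher": 1, "lower": 8})

# Adding the two groups should give the overall counts in Table 7.
combined = neat_to_wp + messy_to_wp


def percentages(counts):
    """Return each category as a whole-number percentage of all essays."""
    total = sum(counts.values())
    return {category: round(100 * n / total) for category, n in counts.items()}


if __name__ == "__main__":
    print(dict(combined))
    print(percentages(combined))
```

The combined counts come to 4 unchanged, 2 higher, and 14 lower out of 20 essays, that is 20%, 10%, and 70%, which agrees with Table 7.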

In English as a Second Language situations, does handwriting legibility make

a difference in assessment scores and what effect does the use of word-processed

essays have on rater assessment? These research questions seem to have been

answered, with very interesting results.

The data from the legibility group show that handwritten transcriptions received

higher median scores than their original counterparts. The reason for this pattern

is unclear. Since it does not seem that handwriting legibility was a large factor,

it may be that the very act of transcription elevates scores. This is difficult

to comprehend because all essays were precisely transcribed with only the legibility

of the essays altered; no grammar, spelling, or compositional errors were corrected

in the transcriptions. Transcription in this manner may have resulted in a cleaner,

more polished appearance for the essays, regardless of handwriting legibility,

thus causing them to receive higher scores.

The findings of past studies indicating that handwriting (usually neat handwriting) results in elevated scores are not supported by this study. Transcribed scores were higher across the board; the difference in scores from original neat to messy transcribed was .5, with a similar result found for original messy to neat transcribed at .6. This seems to indicate that handwriting quality has little to do with the scores received in this study.

The data from the handwritten versus word-processed group show a consistent pattern. Word-processed essays scored lower than their handwritten originals, whether messy or neat. The lowest-scoring category was word-processed messy originals at 2.9, as compared to 3.4 for the word-processed neat originals. 80% of the word-processed messy original essays received lower scores than their handwritten originals, while 60% of the word-processed neat originals received lower scores than their handwritten originals. The fact that word-processed versions scored lower than their original counterparts was expected, but such a wide difference in scores was not. Using scores from both raters, as in a standard EPT assessment, 20% of the writers would find themselves with not just lower scores, but in lower-level ESL classes, simply because their essays happened to be word-processed.
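The median differences discussed above can be recomputed directly from Tables 3 and 4. This is a minimal sketch using only the reported medians; the names `TABLE_3`, `TABLE_4`, and `median_shift` are hypothetical, and the values are rounded to one decimal place to avoid floating-point noise.

```python
# Median scores copied from Tables 3 and 4 as reported.
# Each entry is (original median, transcribed median).
TABLE_3 = {"neat": (4.5, 5.0), "messy": (3.5, 4.1)}  # opposite handwriting
TABLE_4 = {"neat": (4.1, 3.4), "messy": (4.5, 2.9)}  # word-processed


def median_shift(table):
    """Return transcribed median minus original median for each group."""
    return {
        group: round(transcribed - original, 1)
        for group, (original, transcribed) in table.items()
    }


if __name__ == "__main__":
    print("Handwritten transcription:", median_shift(TABLE_3))
    print("Word-processed transcription:", median_shift(TABLE_4))
```

The handwritten transcriptions shift upward by 0.5 (neat originals) and 0.6 (messy originals), while the word-processed versions shift downward by 0.7 and 1.6 respectively.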

Why did word-processed essays receive lower scores? A number of factors could lower the scores of word-processed essays: (1) writer's voice is more clearly perceived in handwritten work as opposed to word-processed work, (2) errors are easier to notice in word-processed essays, (3) raters may have higher expectations from word-processed essays and/or (4) word-processed essays seem shorter in length than their handwritten counterparts. Whether voice is easier to notice in handwritten essays is unknown, as is its effect on the scoring of an essay. Errors do seem to be easier to notice in word-processed essays, which is supported by the data. Messy word-processed essays received lower scores 80% of the time, whereas neat word-processed essays received lower scores only 60% of the time. This could indicate the ease with which errors are discovered between neat and messy essays and their word-processed counterparts. Also, EPT raters, who are also classroom instructors, are used to grading word-processed essays in their classes rather than handwritten essays. This may result in higher expectations and lower scores for word-processed essays, since they appear to be finished works rather than works in progress. The most obvious difference between handwritten essays and word-processed essays is their length. Word-processed essays appear shorter than their handwritten counterparts with the same word-count when using average formatting (12-point Times New Roman or Arial font, double-spaced, with about 1" margins). This may lead the rater to infer lower ability due to a perceived lack of writing on the selected subject. Since perception plays such an integral part in holistic rating, a factor such as perceived length is a major concern in rating word-processed essays.

At present it seems that more research needs to be done on the effects of handwritten versus word-processed essays and the scoring of the EPT. This research concludes with two suggestions for future EPT training and development. The first suggestion is that two writing options for the EPT should not exist at the same time. Were some test takers to handwrite their essays while others word-processed theirs, those who use a word-processor would be put at a disadvantage (20% of writers, as our study suggests). This could possibly be alleviated with training and calibration, but discrepancies would likely remain. If the test were to go to a completely word-processed format, raters would need to be re-calibrated using word-processed samples and the scale would possibly need to be reconsidered as well.

Further research will need to focus on a number of variables: transcription, rater training, why word-processed essays are rated lower than handwritten essays, and the effect of writing done on a word-processor rather than transcribed. The method of transcription seems to be the most influential factor in the rating of neat and messy essays. Our results indicate that no matter what the legibility, simply transcribing essays will improve scores. More attention should be paid to the exact appearance of the original, which should then be reflected in the transcription (e.g., cross-outs, smudges, letter and line spacing, pencil versus pen, and cursive versus print). Raters need to be made aware of these influential variables before participating in a study. This training may affect the scores given, and if not, it would give us valuable insight into the effectiveness of current rater training. The reasons why word-processed essays receive lower scores than handwritten essays also need to be explored. More research should be conducted on the possibilities raised previously: teacher-raters seeing word-processed essays as finished products held to a higher standard and/or the perception that word-processed essays are shorter than handwritten essays. Finally, one of the most intriguing factors that needs to be studied with respect to word-processing versus handwriting is the effect that composing on a word-processor has on the quality or rating of an essay. Transcriptions were an easy way to compare two identical samples, but the effect that composing on a word-processor has on the writing process is one that deserves further study.

A major concern in this study is the validity of a small sample size. There

were a total of 40 samples, 20 original handwritten and 20 transcribed essays.

A larger sample would have made a great difference in the validity of the findings.

However, only four qualified raters were found to participate in this study.

The time needed for rating essays and the scarcity of available raters made

the rating of a larger number of samples impossible.

Another concern was the need for a third rating session for the legibility group.

Due to research error (the samples in session one and two were the same), the

second session had to be thrown out. This meant that the raters in this group

had already been informed of the scope of the experiment. Even though they had

never seen the samples from the third session, knowledge of the experiment's

purpose may have influenced the scores that were given. No such error occurred

with the handwritten versus word-processed group.

Finally, legibility was judged arbitrarily. A review of the literature on handwriting

analysis yielded no definitive rating mechanism. The most practical was the

Ayres Measuring Scale for Adult Handwriting (Ayres, 1920), which is a comparative

scale with which to judge handwriting legibility. Other studies used group rankings

of essays to judge legibility. In the end, each of these methods is relatively

arbitrary, thus I decided that arbitrary judgments on handwriting quality would

be acceptable for this study.

Benchmarks for EPT composition scoring

(apply to graduate students)

updated 12/12/96

Too low (effectively places into ESL 400 [formerly 109])

--> insufficient length

--> extremely bad grammar (1)

--> doesn't write on assigned topic; doesn't use any information from the sources (2)

--> majority of essay directly copied (3)

--> summary of source content marked by inaccuracies (4)

400 [formerly 109]

--> dropped sentences and paragraphs

--> whole essay doesn't make sense; hard to follow the ideas

--> poor choice of words

--> lack of cohesion at the paragraph level

--> grammatical/lexical errors impede understanding (1)

--> only summary/restatement of information in same order as source (2)

--> only uses article; no reference to information in video (2)

--> overt plagiarism: direct copying of passages (3)

--> poor understanding of source content

--> summary of source content contains inaccuracies, both major (concepts) and minor (details) (4)

--> insufficient length

401 [formerly 111]

--> reasonable attempt at introduction, body, conclusion

--> has a main idea; more than restatement of article/video (2)

--> lack of cohesion at the essay level

--> some grammatical/lexical errors; essay still comprehensible (1)

--> summarizes/integrates information from both sources (2)

--> covert plagiarism: some attempts at paraphrasing (3)

--> summary of source content may contain a few minor (details) inaccuracies (4)

Exempt, or recommends 402/403 sequence [formerly 400/401]

--> excellent introduction, body, conclusion

--> cohesion at essay level

--> writing flows smoothly

--> grammatical/lexical errors do not impede understanding (1)

--> uses information from both sources to effectively argue thesis (explicit or implied) (2)

--> no or minimal plagiarism (citation of source is desirable, but not necessary) (3)

--> summarization of source content should contain no major (concepts) inaccuracies (4)

Explication and Rationale for Benchmarks

In general, the task of the rater is to evaluate the student's ability to write an essay and use/synthesize source material.

NOTE: No single issue should be used to place a student in a certain level. A combination of factors should be used.

(1) Grammatical & Lexical Errors

Too Low: Extremely bad grammar; totally incomprehensible.

400: Grammatical/lexical errors impede understanding. Even after rereading, confused about what student means.

401: Some grammatical/lexical errors, but essay is still comprehensible. Might have to reread some sentences, but after rereading can basically understand what student means.

Exempt: Grammatical/lexical errors do not impede understanding. Don't have

to stop and reread parts to understand the essay. Types of errors few and tend

to be those easily corrected.

(2) Responding to Prompt

Too Low: Student writes about a topic other than assigned topic or fails to use any source of information. This demonstrates student either doesn't understand instructions or content or is unable to respond to an assigned topic. If student fails to use sources, raters cannot evaluate student's ability to use sources.

400: Student uses some information from sources, but writes on a topic unrelated or only remotely related to assigned topic (see above rationale). Student simply summarizes/repeats information in the order in which it originally appeared in the source(s), especially if student uses only the article as a source of information. In the case of repeating information in order, rater cannot determine student's ability to organize writing at either paragraph or essay level. In the case of student only using the article, it is difficult to evaluate the student's listening comprehension and ability to use and synthesize information from an aural source. Since 400 gives special attention to listening skills, students who fail to demonstrate listening ability should be placed in 400.

401: Student summarizes and/or integrates information from both aural and written sources. Student develops a main idea related to the topic and supports it with the sources. There may still be lack of cohesion at the essay level.

Exempt: Student skillfully uses information from both sources to effectively

argue his/her thesis (explicit or implied).

(3) Plagiarism

Too Low: Majority of essay is directly copied from sources without citation.

400: Essay contains overt plagiarism. Direct copying of passages/sentences doesn't allow rater to know student's true writing ability. When plagiarism is significant enough to hinder rater's ability to judge how well the student writes, he/she should be placed in 400. (Guideline: Therefore, if someone directly copies approximately 1/3 of the essay, he/she would be in 400.)

401: Essay contains covert plagiarism. There may be imperfect attempts at summarizing and paraphrasing and isolated incidents of direct copying of no more than a couple sentences. If the rest of the essay demonstrates student's writing is good, student can learn about plagiarism in 401.

Exempt: No or minimal plagiarism. Citation of source is desirable but not necessary.

(4) Accuracy of Content

Too Low: Summary of source content is marked by inaccuracies.

400: Summary of source content contains inaccuracies, both major (concepts) and minor (details).

401: Summary of source content should, on the whole, be accurate but may contain a few minor (details) inaccuracies.

Exempt: Summarization of source content should contain no major (concepts)

inaccuracies.

These are EPT essays. You should rate them as you would a regular EPT. Use

You will be given a set of ten EPTs to rate. These should be rated according

to the standard EPT rating criteria (too low, 400, 401, exempt). One exception

being that plus (+) or minus (-) indicators should be assigned to higher or

lower scores, respectively, in tests meeting the requirements for a 400 or 401

rating. Also, you are encouraged to write any comments on the tests that you

consider in your rating process (i.e., disorganized, awkward sentence/phrase,

grammar errors, etc…). I am particularly interested in the moment where

you first 'know' what level you will be assigning. This may change a couple

times within one essay.

This is a qualitative evaluation of EPT essays, so please feel free to write as much or as little as you'd like. There is no time limit for these ratings, but they should be done in one sitting, beginning immediately. Please refrain from discussing your ratings with other raters.

EPT Testing

You are invited to participate in a study of rater perception on the rating

of the ESL Placement Test (EPT) essays. You will rate EPT essays, giving detailed

annotations on your perceptions of the quality of the work. You may withdraw

from the study at any time for any reason.

You are asked to participate in a total of two sessions. Each session will last approximately two hours, for a total of about four hours. You will receive graduate pay rate ($13.82/hr for first year students and $14.42/hr for students after their first year). If you withdraw from the study, you will be paid for the time that you have completed.

Your performance in this study will be completely confidential. Your responses will be coded to be anonymous, and any publications or presentation of the results of the research will include only information about group performance.

You are encouraged to ask any questions that you might have about this study whether before, during, or after your participation. However, answers that could influence the outcome of the study will be deferred to the end of the experiment. Questions can be addressed to Daniel Craig (373-1438 or dacraig@uiuc.edu).

I understand the above information and voluntarily consent to participate in the experiment described above. I have been offered a copy of this consent form.

Signature: __________________________________________ Date: _______________

Example 1

Example 2

Example 1

Example 2